Most Psychology findings are not replicable. What can be done? Stanford psychologist Michael Frank has an idea: cumulative study sets with internal replication. ‘If I had to advocate for a single change to practice, this would be it.’ I took a look at whether this makes any difference.

A recent paper in the journal Science tried to replicate 97 statistically significant effects (Open Science Collaboration, 2015). This succeeded in only 35 cases. Most findings were a lot weaker upon replication. This has led to a lot of soul-searching among psychologists. Fortunately, the authors of the Science paper have made their data freely available, so soul-searching can be accompanied by trying out different ideas for improvement.

What can be done to solve Psychology’s replication crisis?

One idea to improve the situation is to require study authors to replicate their own experiments within the same paper. Stanford psychologist Michael Frank writes:

If I had to advocate for a single change to practice, this would be it. In my lab we never do just one study on a topic, unless there are major constraints of cost or scale that prohibit that second study. Because one study is never decisive.* Build your argument cumulatively, using the same paradigm, and include replications of the key effect along with negative controls. […] If you show me a one-off study and I fail to replicate it in my lab, I will tend to suspect that you got lucky or p-hacked your way to a result. But if you show me a package of studies with four internal replications of an effect, I will believe that you know how to get that effect – and if I don’t get it, I’ll think that I’m doing something wrong.

Are internal replications the solution? No.

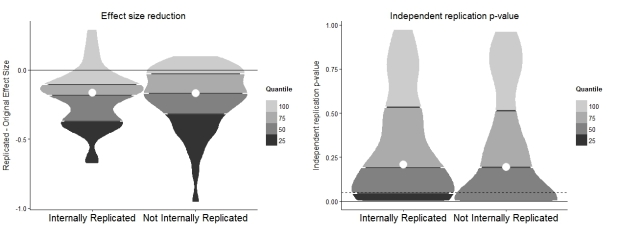

So, do the data from the Reproducibility Project show a difference? I made so-called violin plots, in which thicker parts represent more data points. In the left plot you see the reduction in effect sizes from a bigger original effect to a smaller replicated effect. The reduction associated with internally replicated effects (left violin) and effects which were only reported once in a paper (right violin) is more or less the same. In the right plot you can see the p-value of the replication attempt. The dashed line represents the arbitrary 0.05 threshold used to determine statistical significance. Again, replicators appear to have had as hard a task with effects that were found more than once in a paper as with effects which were only found once.

If you do not know how to read these plots, don’t worry. Just focus on this key comparison: 29% of internally replicated effects could also be replicated by an independent team (one effect with a replication p-value just below .055 is not counted here). The equivalent figure for effects that were not internally replicated is 41%. A contingency table Bayes factor test (Gunel & Dickey, 1974) shows that the data are 1.97 times more likely under the null hypothesis of no difference than under the alternative. In other words, the 12-percentage-point replication advantage for effects that were not internally replicated does not provide convincing evidence for an unexpected reversed replication advantage. Nor is the difference due to statistical power: power was 92% on average for both internally replicated and not internally replicated studies. So, the picture doesn’t support internal replications at all. According to this data set, they are hardly the solution to Psychology’s replication problem.
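For readers who want to see what kind of computation sits behind such a number, here is a minimal R sketch of a Bayes factor for the 2 × 2 comparison (internally replicated or not × independent replication succeeded or failed). It is a simpler stand-in, not the Gunel and Dickey (1974) test used above: it compares two binomial proportions with uniform Beta(1, 1) priors, one shared proportion under the null and two independent proportions under the alternative. The function name `bf01_two_proportions` is hypothetical, and the counts in the usage line are made-up placeholders rather than the Reproducibility Project data, so the output will not reproduce the 1.97 reported here.

```r
# Minimal sketch (assumption): Bayes factor BF01 comparing two binomial
# proportions with uniform Beta(1, 1) priors. This is NOT the Gunel & Dickey
# (1974) contingency table test; it is a simpler beta-binomial stand-in.
#   y1, n1: successful independent replications / total, internally replicated
#   y2, n2: the same for effects that were not internally replicated
bf01_two_proportions <- function(y1, n1, y2, n2) {
  stopifnot(y1 >= 0, y2 >= 0, y1 <= n1, y2 <= n2)
  # log marginal likelihood under H0: one shared proportion p ~ Beta(1, 1)
  log_m0 <- lchoose(n1, y1) + lchoose(n2, y2) +
    lbeta(y1 + y2 + 1, (n1 - y1) + (n2 - y2) + 1)
  # log marginal likelihood under H1: independent p1, p2 ~ Beta(1, 1);
  # each binomial term then integrates to exactly 1 / (n + 1)
  log_m1 <- -log(n1 + 1) - log(n2 + 1)
  # BF01 > 1 means the data are more likely under "no difference"
  exp(log_m0 - log_m1)
}

# Usage with hypothetical placeholder counts (not the RPP data):
bf01_two_proportions(y1 = 10, n1 = 30, y2 = 12, n2 = 30)
```

Applying it to the real data would require the per-group numbers of successful and failed independent replications, tabulated from the Reproducibility Project data. Working on the log scale with `lchoose()` and `lbeta()` avoids overflow for larger counts.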

The problem with internal replications

I believe that internal replications do not prevent many of the questionable research practices that lead to low replication rates, e.g., sampling until a result is significant and selectively reporting effects. To give you just one infamous example which was not part of this data set: in 2011 Daryl Bem showed his precognition effect 8 times (Bem, 2011). Even with 7 internal replications, I still find it unlikely that people can truly feel future events. Instead, I suspect that questionable research practices and pure chance are responsible for the results. Needless to say, independent research teams were unsuccessful in their attempts to replicate Bem’s psi effect (Ritchie et al., 2012; Galak et al., 2012). There are also formal statistical reasons that make papers with many internal replications even less believable than papers without internal replications (Schimmack, 2012).
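The core of the statistical argument in Schimmack (2012) can be illustrated with a line of arithmetic: if each study in a multiple-study article has statistical power below 1, the probability that every one of them comes out significant shrinks quickly as studies are added. The R sketch below assumes independent studies with equal power; the function name `prob_all_significant` and the power values are hypothetical illustrations, not estimates for Bem's experiments.

```r
# Hypothetical helper (assumption): probability that all k independent
# studies reach significance when each has the same statistical power.
# With realistic power this probability is small, so an unbroken run of
# significant results is itself a reason for suspicion.
prob_all_significant <- function(power, k) {
  stopifnot(power >= 0, power <= 1, k >= 1)
  power^k
}

# Illustrative power values only (not estimates for any real study):
powers <- c(0.5, 0.8, 0.95)
k <- 8  # e.g. an article reporting eight significant effects
round(sapply(powers, prob_all_significant, k = k), 3)
# 0.004 0.168 0.663
```

Even at 80% power per study, eight significant results in a row would be expected in only about one in six such articles.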

What can be done?

In my previous post I showed evidence for questionable research practices in this data set. These practices lead to less replicable results. Pre-registering studies makes questionable research practices a lot harder and should therefore make science more reproducible. It would be interesting to see data on whether this hunch is true.

[update 7/9/2015: Adjusted claims in paragraph starting ‘If you do not know how to read these plots…’ to take into account the different denominators for replicated and unreplicated effects. Lee Jussim pointed me to this.]

[update 24/10/2015: Adjusted claims in paragraph starting ‘If you do not know how to read these plots…’ to provide correct numbers, Bayesian analysis and power comparison.]

— — —

Bem, D. J. (2011). Feeling the future: Experimental evidence for anomalous retroactive influences on cognition and affect. Journal of Personality and Social Psychology, 100(3), 407–425. PMID: 21280961

Galak, J., LeBoeuf, R., Nelson, L., & Simmons, J. (2012). Correcting the past: Failures to replicate psi. Journal of Personality and Social Psychology, 103(6), 933–948. DOI: 10.1037/a0029709

Gunel, E., & Dickey, J. (1974). Bayes factors for independence in contingency tables. Biometrika, 61(3), 545–557. DOI: 10.2307/2334738

Open Science Collaboration (2015). Estimating the reproducibility of psychological science. Science, 349(6251). PMID: 26315443

Ritchie, S. J., Wiseman, R., & French, C. C. (2012). Failing the future: Three unsuccessful attempts to replicate Bem’s ‘retroactive facilitation of recall’ effect. PLoS ONE, 7(3). PMID: 22432019

Schimmack, U. (2012). The ironic effect of significant results on the credibility of multiple-study articles. Psychological Methods, 17(4), 551–566. PMID: 22924598

— — —

Code for reproducing the figure (if you find mistakes, please tell me!):

```r
## Estimating the association between internal replication and independent reproducibility of an effect
# Richard Kunert for Brain's Idea 5/9/2015
# A lot of code was taken from the reproducibility project code here: https://osf.io/vdnrb/

# Installing/loading the packages:
library(devtools)
source_url('https://raw.githubusercontent.com/FredHasselman/toolboxR/master/C-3PR.R')
in.IT(c('ggplot2','RColorBrewer','lattice','gridExtra','plyr','dplyr','httr','extrafont'))

# Loading the data
RPPdata <- get.OSFfile(code='https://osf.io/fgjvw/',dfCln=T)$df
RPPdata <- dplyr::filter(RPPdata, !is.na(T.pval.USE.O),!is.na(T.pval.USE.R), complete.cases(RPPdata$T.r.O,RPPdata$T.r.R)) # 97 studies with significant effects

# Prepare IDs for internally replicated effects (at least one successful conceptual
# replication in the original article) and non-internally replicated effects
idIntRepl <- RPPdata$Successful.conceptual.replications.O > 0
idNotIntRepl <- RPPdata$Successful.conceptual.replications.O == 0

# Get ggplot2 themes predefined in C-3PR
mytheme <- gg.theme("clean")

# Restructure data in a data frame: one row per effect, holding the effect size
# difference (replication minus original), the replication p-value and the group
dat <- data.frame(EffectSizeDifference = as.numeric(c(c(RPPdata$T.r.R[idIntRepl]) - c(RPPdata$T.r.O[idIntRepl]),
                                                     c(RPPdata$T.r.R[idNotIntRepl]) - c(RPPdata$T.r.O[idNotIntRepl]))),
                  ReplicationPValue = as.numeric(c(RPPdata$T.pval.USE.R[idIntRepl],
                                                   RPPdata$T.pval.USE.R[idNotIntRepl])),
                  grp = factor(c(rep("Internally Replicated Studies",times=sum(idIntRepl)),
                                 rep("Internally Unreplicated Studies",times=sum(idNotIntRepl))))
)

# Create some variables for plotting (factor levels sort alphabetically,
# so group 1 = internally replicated, group 2 = not internally replicated)
dat$grp <- as.numeric(dat$grp)
probs <- seq(0,1,.25)

# VQP PANEL A: reduction in effect size -------------------------------------------------
# Get effect size difference quantiles and frequencies from data
# (freqs and labels are for inspection only; they are not passed to vioQtile() below)
qtiles <- ldply(unique(dat$grp),
                function(gr) quantile(round(dat$EffectSizeDifference[dat$grp==gr],digits=4),probs,na.rm=T,type=3))
freqs <- ldply(unique(dat$grp),
               function(gr) table(cut(dat$EffectSizeDifference[dat$grp==gr],breaks=qtiles[gr,],na.rm=T,include.lowest=T,right=T)))
labels <- sapply(unique(dat$grp),
                 function(gr) levels(cut(round(dat$EffectSizeDifference[dat$grp==gr],digits=4), breaks = qtiles[gr,],na.rm=T,include.lowest=T,right=T)))

# Get regular violinplot using package ggplot2
g.es <- ggplot(dat,aes(x=grp,y=EffectSizeDifference)) + geom_violin(aes(group=grp),scale="width",color="grey30",fill="grey30",trim=T,adjust=.7)

# Cut at quantiles using vioQtile() in C-3PR
g.es0 <- vioQtile(g.es,qtiles,probs)

# Garnish, i.e. decorate the plot: title, axis labels and theme
g.es1 <- g.es0 +
  ggtitle("Effect size reduction") + xlab("") + ylab("Replicated - Original Effect Size") +
  xlim("Internally Replicated", "Not Internally Replicated") +
  mytheme + theme(axis.text.x = element_text(size=20))

# View
g.es1

# VQP PANEL B: p-value -------------------------------------------------
# Get p-value quantiles and frequencies from data
qtiles <- ldply(unique(dat$grp),
                function(gr) quantile(round(dat$ReplicationPValue[dat$grp==gr],digits=4),probs,na.rm=T,type=3))
freqs <- ldply(unique(dat$grp),
               function(gr) table(cut(dat$ReplicationPValue[dat$grp==gr],breaks=qtiles[gr,],na.rm=T,include.lowest=T,right=T)))
labels <- sapply(unique(dat$grp),
                 function(gr) levels(cut(round(dat$ReplicationPValue[dat$grp==gr],digits=4), breaks = qtiles[gr,],na.rm=T,include.lowest=T,right=T)))

# Get regular violinplot using package ggplot2
g.pv <- ggplot(dat,aes(x=grp,y=ReplicationPValue)) + geom_violin(aes(group=grp),scale="width",color="grey30",fill="grey30",trim=T,adjust=.7)

# Cut at quantiles using vioQtile() in C-3PR
g.pv0 <- vioQtile(g.pv,qtiles,probs)

# Garnish: add the dashed p = .05 reference line, title, axis labels and theme
g.pv1 <- g.pv0 + geom_hline(aes(yintercept=.05),linetype=2) +
  ggtitle("Independent replication p-value") + xlab("") + ylab("Independent replication p-value") +
  xlim("Internally Replicated", "Not Internally Replicated") +
  mytheme + theme(axis.text.x = element_text(size=20))

# View
g.pv1

# Put the two plots together
multi.PLOT(g.es1,g.pv1,cols=2)
```

This is a really great analysis. As Jay van Bavel just said on Twitter, “this is genuinely disturbing”.

One concern: I’m not sure how well the variables representing internal replication are coded. The study I helped replicate, “Prescribed optimism: Is it right to be wrong about the future?”, is listed as having zero internal replications. My hunch is that this is because it was a single study paper; however, this single study included conceptual replications within-participants (i.e. prescribing optimism across four different domains). To say that it wasn’t conceptually replicated misses a feature of the design. Notably, this is just one study and that won’t substantially change the results of your analysis. My concern is that there may be other similar errors.

And finally, the study I helped replicate did in fact replicate, but I suspect that this had more to do with the higher-powered key test than with the internal conceptual replication.

Excellent article. As a consumer of science, I have been skeptical of some of what I read of psychological research. Your article showed me why internal replication is not the solution in a very compelling way through the violin plots. The key comparison of 17 studies you offered as an alternative to the plots was not as clear to me. Nevertheless, you do a great service in sharing your thoughtful follow-up to this proposed solution to this “crisis”, and I hope that you continue sharing your thoughts. Thank you.

Take 97 effects which were found to be statistically significant in the original publication. Only 36% of them were significant when replicated by independent teams. Of these successful replications, how many come from articles with internal replications? Half (17) do.

Clearer now?

The truth is psychology is not singular in its replication failure rate, because replication failure is inherent in the way science unfolds and because our replication failure rate is no different from that of other fields. Here is my PT blog on the topic: https://www.psychologytoday.com/blog/good-thinking/201508/is-psychological-science-bad-science.

Here is a New York Times article that makes some of the same points: http://www.nytimes.com/2015/09/01/opinion/psychology-is-not-in-crisis.html?_r=0

And here is an Economist article from 2013 that details replication failures in science generally: http://www.economist.com/news/briefing/21588057-scientists-think-science-self-correcting-alarming-degree-it-not-trouble

What about doing internal replications *and* reporting all results, so no leaving out possible non-replicated studies? That, to me at least, is implied when talking about internal replications in publications. Otherwise, it makes little sense.

Could it be that papers that reported internal replications may have left out non-significant/non-replicated studies? It would be interesting to get some data on that.

Excellent post and full paper on it which I’ve recently reread (http://link.springer.com/article/10.3758/s13423-016-1030-9). I have one thought: you characterise the internal replications as conceptual and the independent replications as direct. I completely agree with the value of direct replications but I was wondering if you coded/there is a way to code for the differences in the independent replications to the original. Most were very faithful but there were a few that used different populations (e.g. from different countries) and so would not be quite the same as a direct replication which was closer to the original. Do we know if that variation could/did have an effect on the relationship between internal replication and independent replication?

[PsychBrief’s question relates to the paper he links to in which I use the result of this blog post to argue against an unknown moderator account underlying the widespread failure to replicate psychological effects and instead invoke a questionable research practice account.]

Thanks for your comment, PsychBrief.

Is there a way ‘to code for the differences in the independent replications to the original’? Yes, of course one could code all sorts of differences, e.g. different populations as you suggest. The question though is which differences are differences of importance. Given that unknown moderators are unknown I doubt that one can say a priori which differences will be important. Only dedicated theorising and testing could reveal the unknown moderators of a given effect.

Instead of revealing specific unknown moderators, I opted for a probabilistic approach. I do not aim to reveal unknown moderators but instead rely on the fact that if there are more differences between studies, it is more likely that at least one crucial difference will be included. So, I do not assume that direct replications were perfect. All I assume is that they were more direct than conceptual replications.
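The probabilistic argument can be made concrete with a toy formula. Assume, purely for illustration, that each difference between an original study and a replication independently has the same probability q of being a crucial (moderating) difference. Then the chance that at least one of d differences is crucial is 1 - (1 - q)^d, which grows with d. Conceptual replications differ from the original in more ways than direct ones, so under this assumption they are more exposed to unknown moderators. The function name `prob_any_crucial` and the values of q and d below are hypothetical.

```r
# Toy model (assumption): each of d differences between two studies is
# independently "crucial" with the same probability q.
prob_any_crucial <- function(q, d) {
  stopifnot(q >= 0, q <= 1, d >= 0)
  1 - (1 - q)^d
}

q <- 0.1           # hypothetical per-difference probability
direct_d <- 2      # hypothetical: few differences (more direct replication)
conceptual_d <- 6  # hypothetical: more differences (conceptual replication)
prob_any_crucial(q, direct_d)      # 0.19
prob_any_crucial(q, conceptual_d)  # 0.468559
```

The exact numbers are irrelevant; the only point the sketch carries is that the probability increases monotonically with the number of differences, without assuming that direct replications were perfect.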